Google DeepMind lifts the lid on a sharper robotics brain

The model specializes in reasoning capabilities critical for robotics, including visual and spatial understanding, task planning and success detection.

Google DeepMind has unveiled Gemini Robotics-ER 1.6, a reasoning model meant to help robots understand the physical world with greater precision, from spatial judgment to task planning and success detection. The upgrade also improves multi-view understanding and adds instrument reading, a capability developed with Boston Dynamics, according to a blog post shared by DeepMind’s Laura Graesser and Peng Xu.

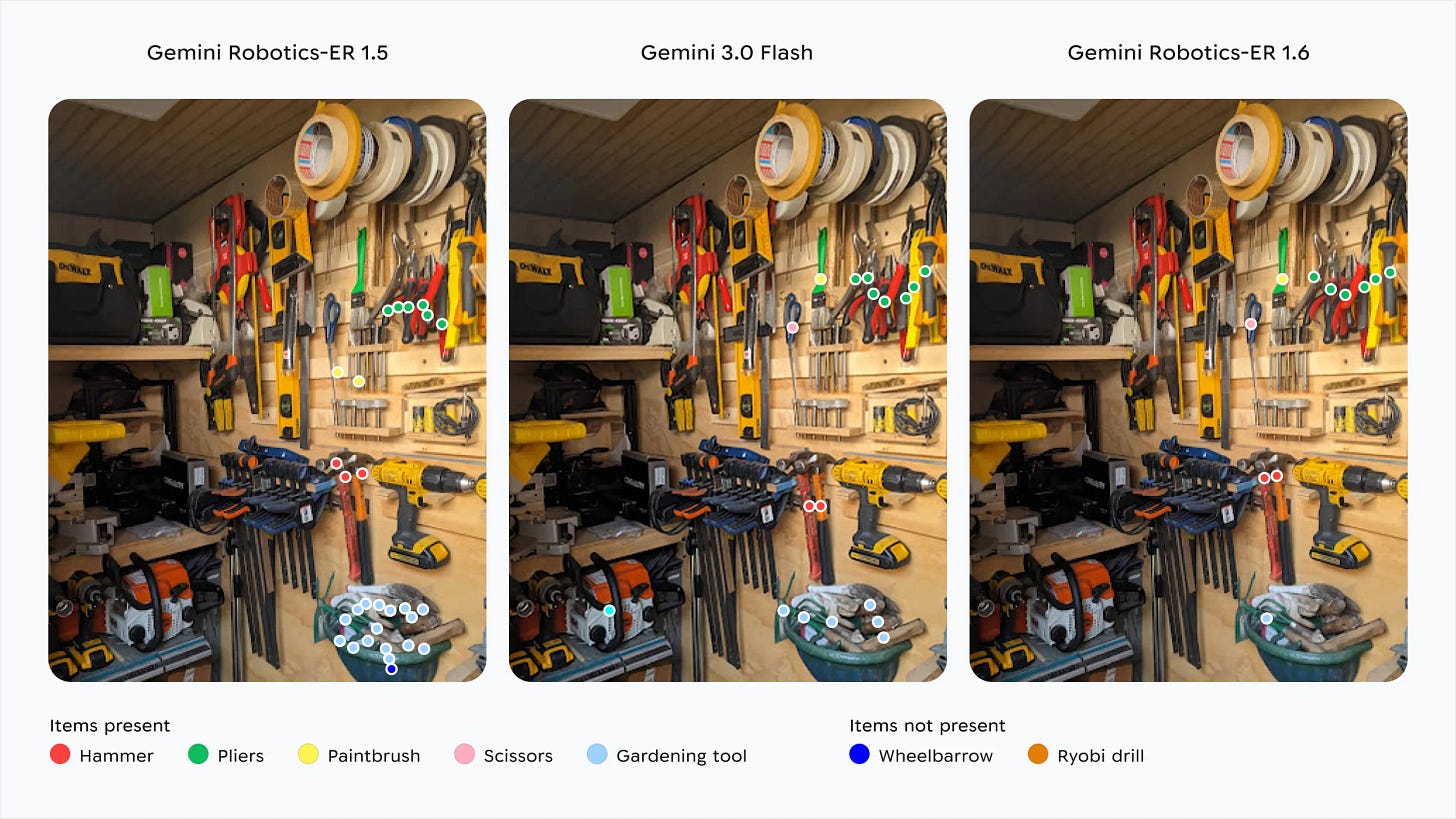

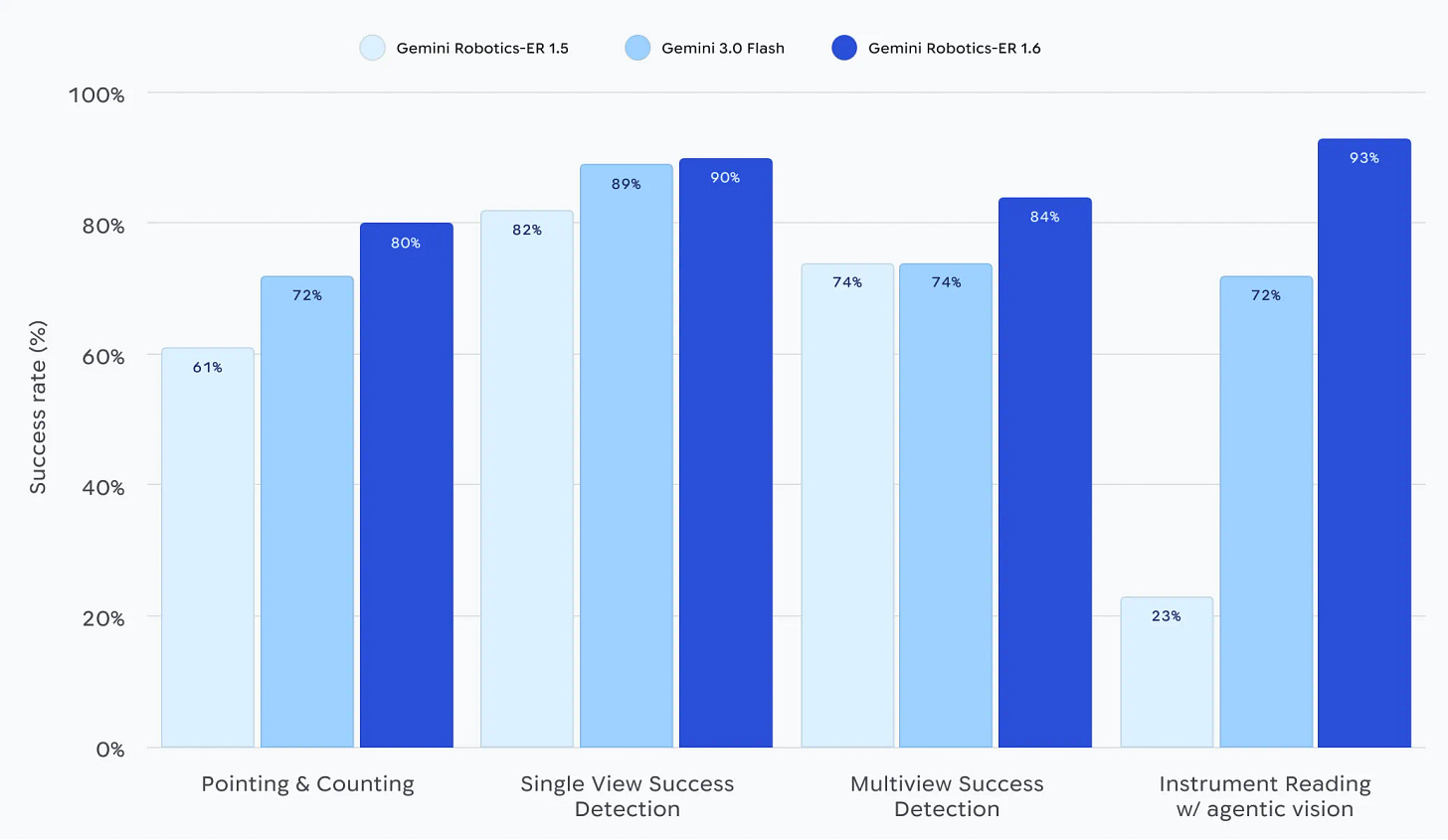

The model is meant to serve as a high-level brain for robots, able to call tools such as Google Search, vision-language-action models and third-party functions as part of its planning. DeepMind says it performs better than both Gemini Robotics-ER 1.5 and Gemini 3.0 Flash on tasks such as pointing, counting and telling whether a job has been completed.

The announcement matters beyond one lab’s technical progress. Robotics has long been constrained less by hardware than by the difficulty of making machines reason reliably in messy, changing environments. Advances in embodied reasoning could therefore speed the adoption of robots in factories, warehouses, inspection and service roles worldwide.

“We are also unlocking a new capability: instrument reading, enabling robots to read complex gauges and sight glasses — a use case we discovered through close collaboration with our partner, Boston Dynamics,” Graesser and Xu said in the post.

DeepMind frames success detection as central to autonomy, arguing that robots must know not just how to act, but whether an action has worked. It also says Gemini Robotics-ER 1.6 improves multi-view reasoning, allowing the system to relate multiple camera feeds even when scenes are dynamic or partly obscured.

The company is also pitching the model as its “safest robotics model to date,” claiming superior compliance with safety policies on adversarial spatial reasoning tasks. That claim will matter to industrial users, who want robots that are not merely clever but predictable. DeepMind says it is inviting developers to submit labelled images showing failure modes, suggesting the work remains in progress and open to outside input rather than a finished product.